
12/19/2023

Splunk eval stats

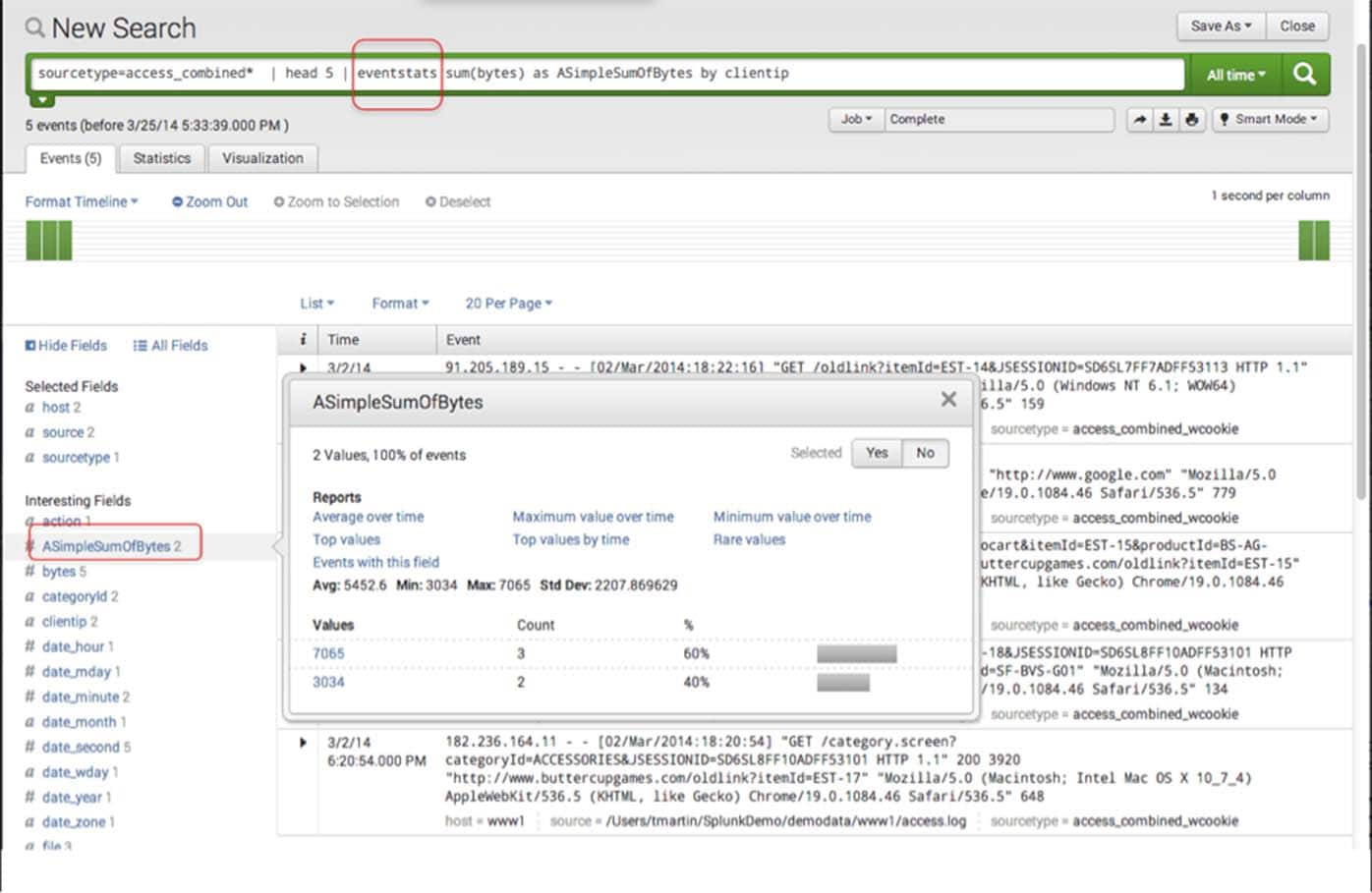

One of the most powerful uses of Splunk rests in its ability to take large amounts of data and pick out the outliers. For some events this can be done simply, where the most and least common values can be picked out via commands like top and rare. However, more subtle anomalies, or anomalies occurring over a span of time, require a more advanced approach. This article will offer an explanation of the standard score (also known as z-score) in statistics, how to implement it in Splunk’s search processing language (SPL), and some caveats associated with the technique. By the end of this article you should have a better familiarity with these statistical concepts and gain some intuition on the appropriate uses of such techniques.

There are several commands and subcommands that this technique uses. Below is a brief overview of these; feel free to skip this section if you’re already familiar with them.

- The bin/bucket commands (which can be used interchangeably) break timestamps down into chunks we can use for processing in the stats command.
- Average: calculates the average (sum of all values over the number of events) of a particular numerical field.
- Stdev: calculates the standard deviation of a numerical field. Standard deviation is a measure of how variable the data is. If the standard deviation is low, you can expect most data to be very close to the average. If it is high, the data is more spread out.
- Count: provides a count of occurrences of field values within a field. You’ll want to use this if you’re dealing with text data.
- Sum: provides a sum of all values of data within a given field. You’ll want to use this for numerical data (e.g. if the field contains the number of bytes transferred in the event).

When calculating the statistics mentioned above, we need to make sure the sample size we’re choosing accurately represents the data. If we choose too small of a timeframe, we might not get a representative sample of the data. Our calculations could produce either a lot of false positives or miss some anomalous events as a result. Luckily, the Central Limit Theorem offers us some insight into how many events we need for a good sample. The short version of the theorem states that as sample size increases, the distribution of the sample mean (average) approaches a normal distribution centered on the mean of the overall population, so the sample mean becomes a more reliable estimate of it. Since getting an average for all your data is likely impractical computationally, we can use this theorem to our advantage. As a rule of thumb, if we can create a search that has around 30 data points per time span, we’ll likely have enough data to have an accurate sample. Given this information, we can calculate some statistics about the normal indexing of data and save them into a lookup for future reference.

How do we determine what value of z-score to set for our threshold? The answer is a bit complicated. There are, however, a few rules that we can take into consideration to help us decide.
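The z-score of a value is its distance from the average, measured in standard deviations: (value - average) / standard deviation. As a rough illustration of that arithmetic only, here is a minimal Python sketch (not SPL, and not the article's own search) that mimics the steps described in this article: break timestamps into fixed spans, count events per span, then score each span against the average and standard deviation of the counts. The function names, the bucket span, and the default threshold of 3 are assumptions made for this example.

```python
from statistics import mean, stdev


def bucket_counts(timestamps, span):
    """Count events per fixed-width time bucket (similar in spirit to bin + count).

    `timestamps` are epoch seconds and `span` is the bucket width in seconds.
    Buckets with no events are simply absent from the result.
    """
    counts = {}
    for ts in timestamps:
        bucket = ts - (ts % span)  # round down to the start of the bucket
        counts[bucket] = counts.get(bucket, 0) + 1
    return counts


def z_scores(counts):
    """Return {bucket: z-score} for a {bucket: count} mapping.

    Uses the sample standard deviation, so at least two buckets are required.
    """
    values = list(counts.values())
    avg = mean(values)
    sd = stdev(values)
    if sd == 0:
        # Every bucket has the same count, so nothing deviates from the average.
        return {bucket: 0.0 for bucket in counts}
    return {bucket: (value - avg) / sd for bucket, value in counts.items()}


def flag_outliers(counts, threshold=3.0):
    """Return the buckets whose absolute z-score exceeds `threshold`.

    The default of 3.0 is an assumption for illustration, not a value
    taken from the article.
    """
    scores = z_scores(counts)
    return sorted(bucket for bucket, z in scores.items() if abs(z) > threshold)
```

In this sketch the baseline (average and standard deviation) is computed from the same data that is being scored. The article instead describes saving the baseline statistics to a lookup, so that later data can be compared against a known-normal period.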
